Adventures with the Linux Command Line, by William Shotts, offers a comprehensive guide to mastering this powerful interface, perfect for beginners and experienced users alike.

What is the Linux Command Line?

The Linux command line is a text-based interface for interacting with your computer; the program that reads and runs what you type is called the shell. Unlike graphical user interfaces (GUIs) with windows and icons, the command line requires you to type commands to instruct the system. It’s a direct pathway to your operating system’s core functionality.

This interface, detailed in resources like William Shotts’ work, provides a natural and expressive way to communicate with your computer. It’s a skill passed down through generations of tech enthusiasts, offering powerful control and automation capabilities. Mastering the command line unlocks efficient system administration, file management, and text processing, going beyond typical GUI limitations.

Why Learn the Command Line?

Learning the Linux command line, as explored in “Adventures with the Linux Command Line” by William Shotts, unlocks a wealth of benefits. It empowers you to administer your system effectively – managing networking, installing packages, and controlling processes. Automation becomes simple through shell scripting, eliminating repetitive tasks.

Beyond practicality, the command line fosters a deeper understanding of your operating system. You’ll gain timeless skills in file navigation, environment configuration, and pattern matching with regular expressions. It’s a powerful tool for makers, students, and anyone seeking efficient computing, offering a connection to the rich heritage of Unix systems.

Basic Commands for File and Directory Management

Essential commands like `mkdir`, `rm`, and `cp` allow you to create, delete, and copy files and directories, forming the foundation of Linux file management.

Navigating the File System: `pwd`, `cd`, `ls`

Understanding file system navigation is crucial. The `pwd` command displays your current working directory, providing context within the Linux hierarchy. `cd` (change directory) allows you to move between directories; simply type `cd directory_name` to enter it, or `cd ..` to go up one level.

The `ls` command lists the files and directories within your current location. Use `ls -l` for a detailed listing with permissions, ownership, and modification dates. Combining these commands – knowing where you are, and being able to move and view contents – forms the basis for effective command-line interaction. Mastering these tools unlocks efficient file management and system exploration.
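As a minimal sketch of how these three commands fit together, the session below works inside a throwaway directory. The `sandbox` variable and the `projects/notes` layout are assumptions made up for illustration; `mktemp -d` only provides a safe place to experiment.

```shell
# Hypothetical sandbox: a temporary directory so nothing real is touched.
sandbox=$(mktemp -d)
mkdir -p "$sandbox/projects/notes"

cd "$sandbox/projects"   # move into a directory
pwd                      # print the current working directory
ls -l                    # detailed listing: shows the notes directory

cd notes                 # relative path: one level down
cd ..                    # back up one level
here=$(basename "$(pwd)")
echo "now in: $here"
```

After the final `cd ..`, the shell is back in `projects`, which is what the last line reports.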

Creating, Deleting, and Copying Files and Directories: `mkdir`, `rm`, `cp`

Essential file management involves creation, deletion, and duplication. The `mkdir directory_name` command creates new directories, organizing your file system. Be cautious with `rm` (remove): it deletes files permanently, with no trash folder or undo. To remove a directory and its contents recursively, use `rm -r directory_name`.

To copy files, use `cp source_file destination_file`, and for directories, add the `-r` option for recursive copying (`cp -r source_directory destination_directory`). These commands, while powerful, require careful use to avoid accidental data loss. Understanding these basics is fundamental for system administration and automation.
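The following sketch strings these commands together in a temporary directory, so a mistake with `rm` cannot hurt real data. The names `work`, `reports`, and `q1.txt` are hypothetical.

```shell
# Hypothetical working area created just for this example.
work=$(mktemp -d)
cd "$work"

mkdir reports                          # create a directory
echo "draft" > reports/q1.txt          # put a small file in it
cp reports/q1.txt reports/q1-copy.txt  # copy a single file
cp -r reports reports-backup           # copy a directory and its contents

rm reports/q1-copy.txt                 # delete one file (no undo)
rm -r reports                          # delete the directory recursively

ls reports-backup                      # the backup still holds both files
```

Copying before deleting, as done here, is a cheap habit that guards against the data loss the commands make possible.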

Working with Files: `touch`, `cat`, `less`, `head`, `tail`

Several commands facilitate file interaction. `touch filename` creates an empty file or updates its timestamp. `cat filename` displays the entire file content, suitable for smaller files. For larger files, `less filename` allows for page-by-page viewing and searching.

`head filename` shows the beginning of a file (default: 10 lines), while `tail filename` displays the end. `tail -f filename` is particularly useful for monitoring log files in real-time. These tools are crucial for inspecting and understanding file contents efficiently from the command line.
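A short, non-interactive sketch of these viewers follows. The file names and the use of `seq` to generate sample data are assumptions for illustration; `less` and `tail -f` are left out because they wait for keyboard input.

```shell
# Hypothetical scratch directory with sample files.
demo=$(mktemp -d)
cd "$demo"

touch empty.log              # creates a zero-byte file
seq 1 25 > numbers.txt       # sample data: the numbers 1..25, one per line

cat empty.log                # prints nothing: the file is empty
head numbers.txt             # first 10 lines (1..10)
tail numbers.txt             # last 10 lines (16..25)
first_three=$(head -n 3 numbers.txt)   # -n changes how many lines are shown
last_line=$(tail -n 1 numbers.txt)
echo "$last_line"
```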

Redirection and Pipelines

Mastering standard input/output, redirection (`>`, `>>`), and pipelines (`|`) empowers you to chain commands and manipulate data effectively within the Linux environment.

Understanding Standard Input, Output, and Error

Every command in Linux interacts with three fundamental data streams: standard input, standard output, and standard error. Standard input is where a command receives its data – often from the keyboard, but it can also be redirected from a file. Standard output is the primary channel for a command to display its results, typically appearing on your terminal screen.

However, commands can also encounter problems or generate diagnostic messages, which are sent to the standard error stream. Understanding these distinctions is crucial for effectively managing command execution and troubleshooting issues. Redirecting these streams allows for powerful control over data flow, enabling you to save output to files or process errors separately, enhancing your command-line efficiency.
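To make the three streams concrete, here is a small sketch assuming a Bash-compatible shell. It uses `2>` to capture standard error and `<` to feed standard input from a file. The file names (`out.txt`, `err.txt`, `words.txt`, `no_such_file`) are invented for the example.

```shell
# Hypothetical scratch directory.
streams=$(mktemp -d)
cd "$streams"

# ls lists the existing path on standard output and reports the missing
# one on standard error; each stream is sent to its own file.
# "|| true" only keeps the example going after ls reports failure.
ls . no_such_file > out.txt 2> err.txt || true

# Standard input can come from a file instead of the keyboard.
printf 'one\ntwo\nthree\n' > words.txt
count=$(wc -l < words.txt)
echo "lines: $count"
```

Keeping errors in `err.txt` means the normal listing in `out.txt` stays clean and can be processed on its own.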

Redirecting Output: `>`, `>>`

Linux provides powerful redirection operators to control where a command’s output is sent. The `>` operator redirects standard output to a file, overwriting the file if it already exists. This is useful for creating new files or replacing existing content with fresh results. Conversely, the `>>` operator appends standard output to a file, preserving existing content and adding the new output to the end.

These redirection tools are essential for automating tasks, logging command results, and building complex workflows. Mastering redirection allows you to seamlessly integrate commands and manage data flow, significantly enhancing your command-line productivity and scripting capabilities.
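The difference between the two operators is easiest to see side by side. In this sketch the log path and the messages are hypothetical.

```shell
# Hypothetical log file in a temporary directory.
logdir=$(mktemp -d)
log="$logdir/run.log"

echo "first run"  > "$log"    # creates the file
echo "second run" > "$log"    # overwrites: "first run" is gone
echo "third run" >> "$log"    # appends after the existing content

cat "$log"
```

The file ends up with two lines, "second run" followed by "third run", because only `>>` preserved what was already there.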

Piping Commands Together: `|`

The pipe operator (`|`) is a cornerstone of the Linux command line, enabling you to chain commands together. It takes the standard output of one command and feeds it as the standard input to the next. This allows for powerful data processing workflows, where each command performs a specific task in a sequence.

For example, you can combine `grep` to filter text with `sort` to arrange the results. This technique dramatically simplifies complex operations, making the command line a remarkably expressive and efficient tool. Mastering pipes unlocks the full potential of the Linux environment.
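The `grep` and `sort` combination can be sketched as follows. The file `fruit.txt` and its contents are made up for illustration.

```shell
# Hypothetical sample data.
pipes=$(mktemp -d)
printf 'pear\napple\nbanana\napricot\n' > "$pipes/fruit.txt"

# grep keeps the lines containing "ap"; sort orders what grep passes on.
result=$(grep 'ap' "$pipes/fruit.txt" | sort)
echo "$result"
```

Only `apple` and `apricot` contain the sequence "ap", so those two lines reach `sort` and come out in alphabetical order.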

Text Processing with Command Line Tools

Tools like `grep`, `sort`, `cut`, and `paste` empower users to slice, dice, and manipulate text files efficiently from the command line.

Filtering Text: `grep`

The `grep` command is a powerful tool for searching plain-text data sets for lines matching a regular expression. It’s invaluable for quickly locating specific information within files or output streams. As highlighted in resources like “Adventures with the Linux Command Line,” mastering `grep` is fundamental to efficient command-line text processing.

`grep`’s functionality extends beyond simple keyword searches; it allows for complex pattern matching, enabling users to pinpoint lines based on intricate criteria. This capability is crucial when dealing with large log files or configuration data. Understanding `grep` unlocks the ability to extract relevant data, making it a cornerstone of system administration and data analysis tasks performed directly from the Linux terminal.
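As an illustration of searching a log file, the sketch below runs `grep` with a few common options. The log contents and the file name `app.log` are invented; the options shown (`-i`, `-n`, `-c`) are standard.

```shell
# Hypothetical log file for the example.
greps=$(mktemp -d)
cat > "$greps/app.log" <<'EOF'
2024-01-05 INFO  service started
2024-01-05 ERROR disk quota exceeded
2024-01-06 WARN  retrying connection
2024-01-06 error connection refused
EOF

grep 'ERROR' "$greps/app.log"            # case-sensitive: one matching line
grep -i 'error' "$greps/app.log"         # -i ignores case: two lines
grep -n '^2024-01-06' "$greps/app.log"   # -n adds line numbers; ^ anchors to line start
error_count=$(grep -ic 'error' "$greps/app.log")   # -c counts matching lines
echo "$error_count"
```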

Sorting Text: `sort`

The `sort` command arranges text lines alphabetically or numerically, providing a structured view of data. It’s a fundamental tool for organizing information extracted from files or the output of other commands. Resources like “Adventures with the Linux Command Line” emphasize its importance in data manipulation.

`sort` offers various options for customization, including case-insensitive sorting, reverse order, and specifying a particular field for sorting. This flexibility makes it suitable for diverse tasks, from alphabetizing lists to ordering numerical data. Combined with other command-line tools, `sort` becomes a powerful component in complex data processing workflows, enhancing efficiency and clarity.
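The effect of these options shows up clearly on a small file that mixes numbers and names. The data in `stock.txt` is hypothetical.

```shell
# Hypothetical inventory: a quantity followed by a name.
sorts=$(mktemp -d)
printf '10 pear\n9 apple\n100 banana\n' > "$sorts/stock.txt"

sort "$sorts/stock.txt"        # text order: "10" and "100" sort before "9"
sort -n "$sorts/stock.txt"     # -n compares numerically: 9, 10, 100
sort -rn "$sorts/stock.txt"    # -r reverses the order: 100, 10, 9
by_name=$(sort -k2 "$sorts/stock.txt")   # -k2 sorts on the second field
echo "$by_name"
```

The plain `sort` result is a common surprise: without `-n`, the lines are compared as text, character by character, so "100" lands ahead of "9".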

Cutting and Pasting Text: `cut`, `paste`

The `cut` and `paste` commands offer basic, yet essential, text manipulation capabilities. `cut` extracts specific sections from each line of a file, defined by delimiters or character positions, enabling focused data retrieval. “Adventures with the Linux Command Line” highlights these tools for slicing and dicing text files.

Conversely, `paste` combines lines from multiple files horizontally. These commands, while simple, are incredibly useful when combined with other tools like `grep` and `sort`. They allow for precise data extraction and re-arrangement, forming the building blocks for more complex text processing tasks within the command line environment.
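A minimal sketch of the two commands working together: `cut` pulls columns out of a colon-delimited file, and `paste` stitches two of them back together. The file names and the sample records are assumptions for illustration.

```shell
# Hypothetical colon-delimited records: name, id, home directory.
cp_dir=$(mktemp -d)
cd "$cp_dir"
printf 'alice:1001:/home/alice\nbob:1002:/home/bob\n' > users.txt

cut -d: -f1 users.txt > names.txt   # -d sets the delimiter, -f picks field 1
cut -d: -f3 users.txt > homes.txt   # field 3: the home directory
cut -c1-3 users.txt                 # -c selects character positions 1 to 3

# paste joins line N of each file, separated by a tab by default.
joined=$(paste names.txt homes.txt)
echo "$joined"
```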

The `vi` Text Editor

`vi`, available on virtually every Unix-like system, is a powerful tool for editing files directly from the command line, as detailed in the guide.

Understanding vi’s modes is crucial for effective text editing. Initially, you enter Normal mode, used for navigating and executing commands – not for directly typing text. Pressing ‘i’ switches to Insert mode, allowing you to input and modify the file content.

To return to Normal mode from Insert mode, press the ‘Esc’ key. vi also features Command-line mode, activated by pressing ‘:’ (colon), enabling you to save, quit, or perform more complex operations. Mastering these modes is fundamental to efficiently utilizing vi, as highlighted in William Shotts’ guide, and overcoming initial “shell shock.”

Basic Editing Commands in `vi`

Within Normal mode, several commands facilitate editing. ‘x’ deletes the character under the cursor, while ‘dd’ removes an entire line. To undo the last action, use ‘u’. ‘yy’ yanks (copies) a line, and ‘p’ pastes it. Navigating is done with ‘h’ (left), ‘j’ (down), ‘k’ (up), and ‘l’ (right).

Remember to switch to Insert mode (‘i’) to type new text. To save changes and exit vi, press ‘:’ to enter Command-line mode, type ‘wq’ (write and quit), and press Enter; the full command reads ‘:wq’. As William Shotts explains, practice is key to becoming proficient with these commands.

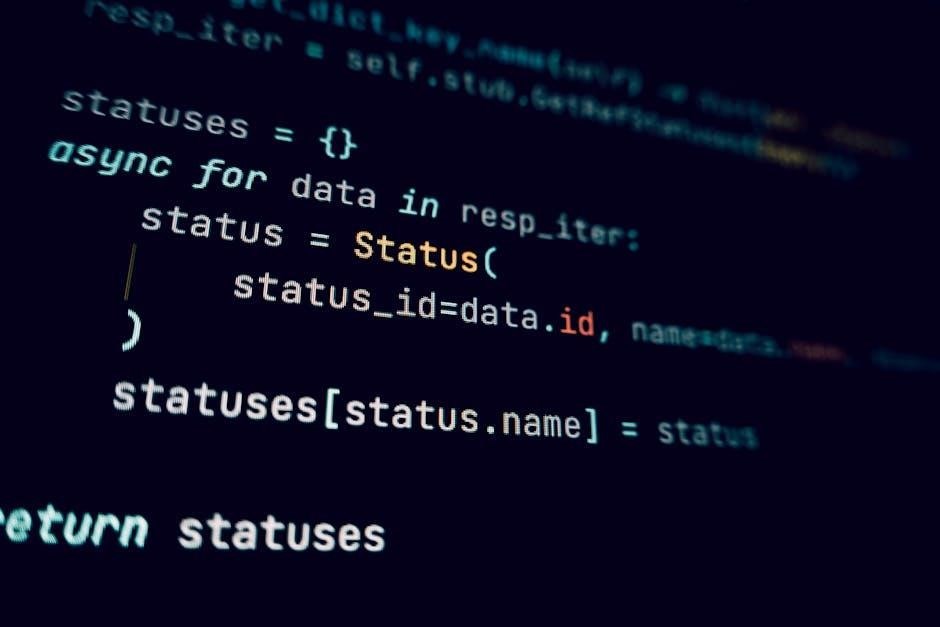

Shell Scripting Fundamentals

Shell scripts automate tasks, utilizing variables and control flow, as detailed by William Shotts, enabling efficient system administration and task repetition.

What is a Shell Script?

A shell script is essentially a text file containing a sequence of commands that the Unix shell can execute. As William Shotts explains, these scripts allow you to automate repetitive tasks, streamlining system administration and complex workflows. Instead of manually typing commands one by one, you can store them in a script and run them with a single command.

This is incredibly powerful for tasks like backing up files, managing system logs, or performing batch processing. Shell scripts are interpreted, meaning the shell reads and executes each command line by line. They are a fundamental part of the Linux ecosystem, offering a flexible and efficient way to interact with the operating system and manage its resources. Learning to write shell scripts unlocks a new level of control and automation.

Writing and Executing Simple Shell Scripts

Creating a shell script is straightforward: use a text editor to write a series of commands, one per line. Begin the script with a shebang line (`#!/bin/bash`) to specify the interpreter. By convention, save the file with a `.sh` extension, although the shell does not require one. As described by William Shotts, making the script executable requires using the `chmod +x scriptname.sh` command.

To run the script, simply type `./scriptname.sh` in the terminal. This executes the commands within the script sequentially. Simple scripts can automate basic tasks, like creating directories or displaying messages. Mastering this process is a crucial step towards more complex scripting and system automation, offering efficiency and control.
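The write, `chmod`, and run cycle can be sketched end to end. The script name `hello.sh` and its message are hypothetical, and the example assumes Bash is installed at `/bin/bash` and that the temporary directory allows executing files.

```shell
# Hypothetical scratch directory for the script.
scripts=$(mktemp -d)
cd "$scripts"

# Write a two-line script; the quoted 'EOF' keeps the text literal.
cat > hello.sh <<'EOF'
#!/bin/bash
echo "Hello from $(basename "$0")"
EOF

chmod +x hello.sh          # mark the file as executable
output=$(./hello.sh)       # run it via its path
echo "$output"
```

The leading `./` matters: the current directory is usually not on the command search path, so the shell needs an explicit path to find the script.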

Variables and Control Flow in Shell Scripts

Shell scripts leverage variables to store data, accessed using a dollar sign (`$`) before the variable name. William Shotts highlights the importance of understanding control flow structures like `if` statements, allowing scripts to make decisions based on conditions. `for` and `while` loops enable repetitive tasks.

These constructs, combined with commands, create dynamic and adaptable scripts. For example, an if statement can check if a file exists before attempting to process it. Loops can iterate through lists of files or perform actions a specified number of times, automating complex procedures efficiently.
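The sketch below puts a variable, an `if` test, a `for` loop, and a `while` loop into one short script. All names (`workdir`, `target`, `a.txt`, `b.txt`) are invented for the example.

```shell
# Hypothetical files to work on.
workdir=$(mktemp -d)
touch "$workdir/a.txt" "$workdir/b.txt"

# if: only act when the file exists
target="$workdir/a.txt"
if [ -f "$target" ]; then
    echo "found $(basename "$target")"
else
    echo "missing $(basename "$target")"
fi

# for: iterate over a list of files
file_count=0
for f in "$workdir"/*.txt; do
    echo "processing $(basename "$f")"
    file_count=$((file_count + 1))
done

# while: repeat a fixed number of times, summing 1 + 2 + 3
i=1
total=0
while [ "$i" -le 3 ]; do
    total=$((total + i))
    i=$((i + 1))
done
echo "files=$file_count total=$total"
```

Note that a variable is assigned without the dollar sign (`total=0`) and read with it (`$total`), and that quoting expansions such as `"$target"` keeps paths with spaces intact.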

Advanced Command Line Techniques

Mastering regular expressions and process management (`ps`, `top`, `kill`) unlocks powerful capabilities for system administration and complex task automation.

Regular Expressions for Pattern Matching

Regular expressions are a cornerstone of advanced command-line work, enabling powerful text searching and manipulation. They define search patterns, allowing you to locate specific sequences of characters within files or command output. Learning these patterns, handed down through generations of “gray-bearded gurus,” is crucial for efficient system administration.

These expressions allow for flexible matching, going beyond simple literal searches. You can define character classes, quantifiers, and anchors to precisely target the text you need. Tools like `grep` leverage regular expressions to filter data effectively. Learning to write them unlocks the ability to slice and dice text files with precision, automating complex tasks and extracting valuable information from large datasets.
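Character classes, quantifiers, and anchors can each be seen at work in a few `grep -E` calls (extended regular expressions). The word list is made up for illustration.

```shell
# Hypothetical word list.
regex=$(mktemp -d)
printf 'cat\ncot\ncoat\nc9t\nconcat\ncart\n' > "$regex/words.txt"

# Character class [ao] plus anchors ^ and $: matches cat and cot only.
grep -E '^c[ao]t$' "$regex/words.txt"

# Quantifier + (one or more lowercase letters): cat, cot, coat, concat, cart.
grep -E '^c[a-z]+t$' "$regex/words.txt"

# A digit class between c and t: only c9t matches; -c counts the lines.
matches=$(grep -Ec '^c[0-9]t$' "$regex/words.txt")
echo "$matches"
```

Without the anchors, `c[ao]t` would also match inside `concat`, which is why `^` and `$` are worth adding whenever the whole line should fit the pattern.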

Process Management: `ps`, `top`, `kill`

Administering your system effectively requires understanding process management. Commands like `ps` provide snapshots of currently running processes, displaying their IDs, resource usage, and associated commands. `top` offers a dynamic, real-time view of system processes, sorted by CPU usage by default and optionally by memory consumption, aiding in performance monitoring.

When processes become unresponsive or consume excessive resources, the `kill` command lets you terminate them by sending a signal to a process ID; by default it sends a termination request that the process can handle in order to exit cleanly. Proper process management is a vital skill for any Linux administrator, ensuring system stability and responsiveness. Mastering these tools allows you to control and optimize your system’s performance.
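A safe way to practise is to start a harmless process and then terminate it. In this sketch, `sleep 300` stands in for a misbehaving program; the variable names are hypothetical, and `top` is omitted because it is interactive.

```shell
sleep 300 &                # start a long-running background job
pid=$!                     # $! holds the PID of the last background job

# Snapshot of just that process; "|| true" keeps the sketch going on
# minimal systems where ps is not installed.
ps -p "$pid" -o pid,comm || true

kill "$pid"                # default signal is SIGTERM, a polite request to exit

# wait collects the job's exit status; a non-zero value is expected here
# because the process was ended by a signal.
status=0
wait "$pid" 2>/dev/null || status=$?
echo "exit status: $status"
```

If a process ignores the default signal, `kill -9` sends SIGKILL, which cannot be caught; it is usually kept as a last resort because the process gets no chance to clean up.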